GameDevBench

Evaluating Agentic Capabilities Through Game Development

¹Carnegie Mellon University, ²Princeton University

TL;DR

- GameDevBench is the first benchmark for evaluating LM agents on game development tasks.

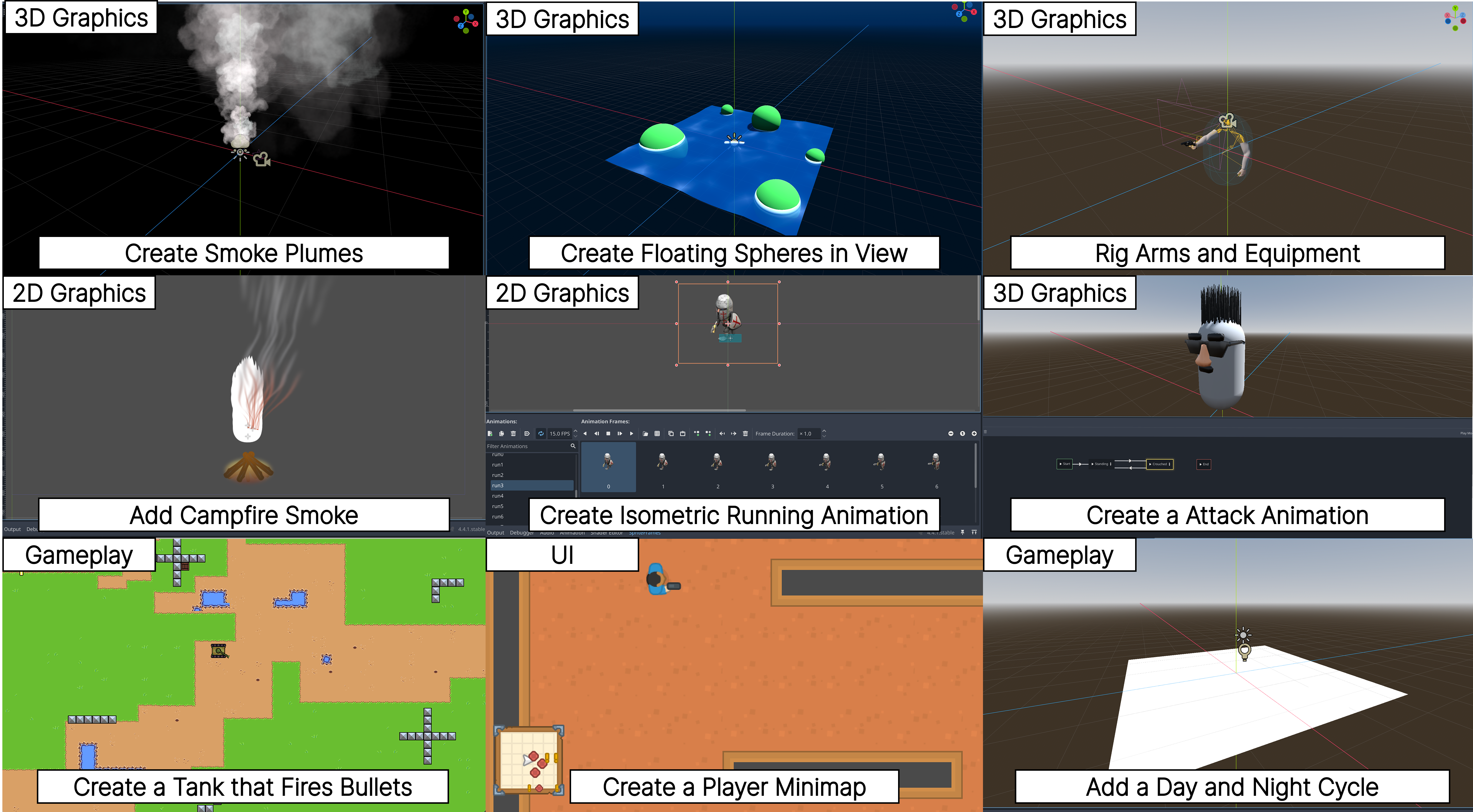

- GameDevBench features 132 tasks set in the Godot engine, collected from web and video tutorials across four skill categories: gameplay logic (collision detectors, character controllers), 3D graphics and animation (material tuning, skeletal animation), 2D graphics and animation (sprite animation, TileMap setup), and user interface (HUD layout, menu navigation).

- Tasks require agents to navigate large codebases and manipulate multimodal assets such as shaders, sprites, and animations.

- GameDevBench shows that even simple multimodal feedback can drastically improve performance.

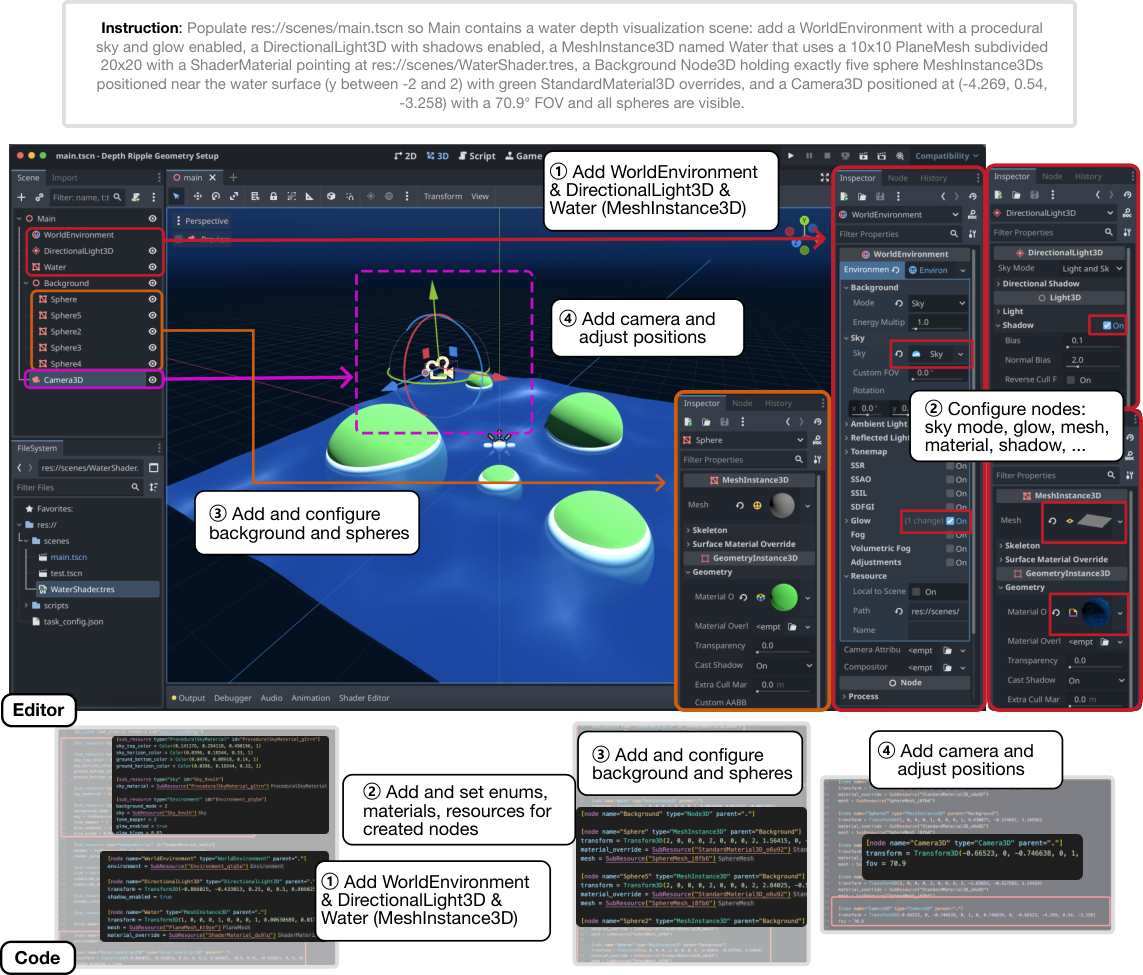

Example Task

In this example, the goal is to populate an empty 3D scene with a water depth visualization, including environment lighting, a shader-driven water plane, background spheres, and a camera. This 3D graphics and animation task focuses on the scene editor. The figure shows both the editor-based and the code-based solution approach.
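To make the code-based route concrete, the script below writes a comparable scene as a Godot 4 text scene file (`.tscn`, `format=3`) instead of placing nodes in the editor. It is a minimal sketch under stated assumptions, not the task's reference solution: the node names, resource ids, colors, positions, and the shader path `res://water_depth.gdshader` are hypothetical, and the water-depth shader itself is assumed to exist already.

```python
"""Hypothetical sketch: emit a Godot 4 text scene (.tscn) for a water-depth demo.

Node names, resource ids, colors, positions, and the shader path are
illustrative assumptions, not the benchmark's reference solution.
"""


def transform(x: float, y: float, z: float) -> str:
    # Identity basis (no rotation) followed by the origin, as written in .tscn files.
    return f"Transform3D(1, 0, 0, 0, 1, 0, 0, 0, 1, {x}, {y}, {z})"


def build_water_scene(shader_path: str = "res://water_depth.gdshader") -> str:
    # load_steps = 1 external resource + 4 sub-resources + the scene itself.
    header = "[gd_scene load_steps=6 format=3]"

    # Shared resources: the (assumed) water shader, the environment, and meshes.
    resources = f"""[ext_resource type="Shader" path="{shader_path}" id="1_water"]

[sub_resource type="Environment" id="Environment_main"]
background_mode = 1
background_color = Color(0.05, 0.1, 0.15, 1)

[sub_resource type="ShaderMaterial" id="ShaderMaterial_water"]
shader = ExtResource("1_water")

[sub_resource type="PlaneMesh" id="PlaneMesh_water"]
material = SubResource("ShaderMaterial_water")
size = Vector2(20, 20)

[sub_resource type="SphereMesh" id="SphereMesh_bg"]"""

    nodes = [
        '[node name="WaterDepthScene" type="Node3D"]',
        # Environment: background_mode = 1 selects a solid background color.
        '[node name="WorldEnvironment" type="WorldEnvironment" parent="."]\n'
        'environment = SubResource("Environment_main")',
        # Directional light left at its default orientation (shining along -Z).
        '[node name="Sun" type="DirectionalLight3D" parent="."]',
        # Shader-driven water plane centered on the origin.
        '[node name="Water" type="MeshInstance3D" parent="."]\n'
        'mesh = SubResource("PlaneMesh_water")',
    ]
    # Three background spheres sharing one mesh, behind the plane's center as seen from the camera.
    for i, x in enumerate((-4, 0, 4)):
        nodes.append(
            f'[node name="Sphere{i}" type="MeshInstance3D" parent="."]\n'
            f"transform = {transform(x, 1, -6)}\n"
            'mesh = SubResource("SphereMesh_bg")'
        )
    # Camera raised and pulled back; with no rotation it looks down -Z toward the spheres.
    nodes.append(
        '[node name="Camera3D" type="Camera3D" parent="."]\n'
        f"transform = {transform(0, 3, 8)}\n"
        "current = true"
    )
    return "\n\n".join([header, resources, *nodes]) + "\n"


if __name__ == "__main__":
    print(build_water_scene())
```

Running the script prints the scene text; saved as, for example, `water_depth.tscn` inside a Godot 4 project that contains the assumed shader, it should open in the editor. Writing the scene as text keeps every change diffable, while the editor route trades that for immediate visual feedback.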

Leaderboard

| Rank | Model | Org | Framework | Score* |
|---|---|---|---|---|
| 1 | gpt-5.3-codex-high | OpenAI | Codex | 59.1 |
| 2 | gemini-3-pro-preview | Google | Gemini CLI | 54.5 |
| 3 | gemini-3-flash-preview | Google | Gemini CLI | 52.3 |
| 4 | claude-sonnet-4-5-20250929 | Anthropic | OpenHands | 51.1 |
| 5 | claude-opus-4-5-20251101 | Anthropic | Claude Code | 50.0 |
| 6 | gpt-5.1-codex-max | OpenAI | OpenHands | 49.6 |
| 7 | claude-opus-4-6 | Anthropic | Claude Code | 49.2 |
| 8 | claude-sonnet-4-5-20250929 | Anthropic | Claude Code | 47.7 |
| 9 | gpt-5.1-codex-max | OpenAI | Codex | 41.7 |
| 10 | gemini-3-flash-preview | Google | OpenHands | 40.5 |
| 11 | kimi-k2.5 | Moonshot AI | OpenHands | 38.9 |
| 12 | claude-haiku-4-5-20251001 | Anthropic | OpenHands | 31.3 |
| 13 | claude-haiku-4-5-20251001 | Anthropic | Claude Code | 25.8 |
| 14 | qwen3-vl-235b-a22b-instruct | Alibaba | OpenHands | 8.3 |

* Each score uses the best multimodal support setting for that configuration.
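The leaderboard mixes models and agent frameworks, and four models appear under two frameworks each. A short Python script over the table rows separates the two factors by reporting, per model, the spread between its best and worst framework; the function name `framework_gaps` is illustrative. Because each row uses that configuration's best multimodal setting, the gap reflects the framework and its multimodal support together, not the framework alone.

```python
from collections import defaultdict

# (model, framework, score) rows transcribed from the leaderboard table.
ROWS = [
    ("gpt-5.3-codex-high", "Codex", 59.1),
    ("gemini-3-pro-preview", "Gemini CLI", 54.5),
    ("gemini-3-flash-preview", "Gemini CLI", 52.3),
    ("claude-sonnet-4-5-20250929", "OpenHands", 51.1),
    ("claude-opus-4-5-20251101", "Claude Code", 50.0),
    ("gpt-5.1-codex-max", "OpenHands", 49.6),
    ("claude-opus-4-6", "Claude Code", 49.2),
    ("claude-sonnet-4-5-20250929", "Claude Code", 47.7),
    ("gpt-5.1-codex-max", "Codex", 41.7),
    ("gemini-3-flash-preview", "OpenHands", 40.5),
    ("kimi-k2.5", "OpenHands", 38.9),
    ("claude-haiku-4-5-20251001", "OpenHands", 31.3),
    ("claude-haiku-4-5-20251001", "Claude Code", 25.8),
    ("qwen3-vl-235b-a22b-instruct", "OpenHands", 8.3),
]


def framework_gaps(rows):
    """Map each model run under 2+ frameworks to (best framework, worst framework, gap)."""
    runs_by_model = defaultdict(list)
    for model, framework, score in rows:
        runs_by_model[model].append((score, framework))
    gaps = {}
    for model, runs in runs_by_model.items():
        if len(runs) < 2:
            continue  # single-framework models carry no framework comparison
        runs.sort(reverse=True)  # highest score first
        (top, best), (bottom, worst) = runs[0], runs[-1]
        gaps[model] = (best, worst, round(top - bottom, 1))
    return gaps


if __name__ == "__main__":
    # Print the largest framework gaps first.
    for model, (best, worst, gap) in sorted(
        framework_gaps(ROWS).items(), key=lambda item: item[1][2], reverse=True
    ):
        print(f"{model}: {best} beats {worst} by {gap} points")
```

On these rows the gap ranges from 3.4 points (claude-sonnet-4-5-20250929, OpenHands over Claude Code) to 11.8 points (gemini-3-flash-preview, Gemini CLI over OpenHands).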

Citation

@misc{chi2026gamedevbenchevaluatingagenticcapabilities,
  title={GameDevBench: Evaluating Agentic Capabilities Through Game Development},
  author={Wayne Chi and Yixiong Fang and Arnav Yayavaram and Siddharth Yayavaram and Seth Karten and Qiuhong Anna Wei and Runkun Chen and Alexander Wang and Valerie Chen and Ameet Talwalkar and Chris Donahue},
  year={2026},
  eprint={2602.11103},
  archivePrefix={arXiv},
  primaryClass={cs.AI},
  url={https://arxiv.org/abs/2602.11103},
}